SOC 2 Type II Compliant

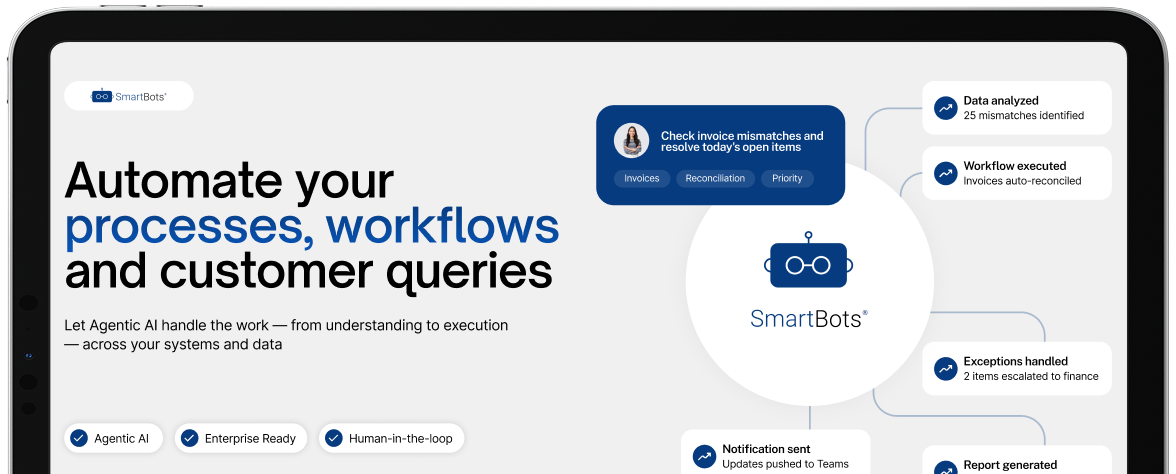

Let Agentic AI handle work – from understanding to execution – across your systems and data.

AI is changing how enterprises operate

No matter where you are in your Agentic AI journey — piloting your first use cases, launching AI in production, or scaling across the enterprise — SmartBots provides the accelerators, tools, and expertise to help you succeed.

Across work and customer interactions, we help you apply AI where it matters most — automating operations, improving decisions, and enhancing every experience across your enterprise.

Automate employee workflows across IT, HR, and finance operations

Simplify everyday work

Reduce time to get answers

Improve decision accuracy

Deliver intelligent, omnichannel customer support at scale

Reduce resolution and handle times

Improve customer satisfaction

Scale support across channels

Automate complex operational workflows end-to-end

Reduce exceptions and manual effort

Enable automated reconciliations

Trigger intelligent escalations

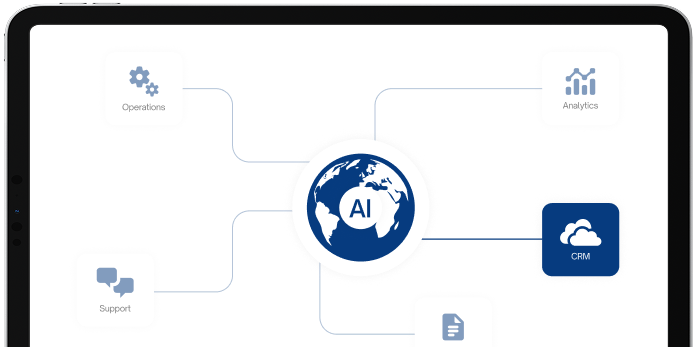

SmartBots combines open AI architecture, enterprise integrations, and proven frameworks to help organizations build, deploy, and scale intelligent agents across their technology ecosystem.

SmartBots helps organizations across industries operationalize Generative AI to automate workflows, unlock insights from data, and enhance decision-making at scale.

Reconciliation workflows

Transaction and risk monitoring

Knowledge assistance

Network monitoring

Workflow automation

Customer support enablement

Inventory visibility

Customer support workflows

Merchandising insights

Predictive maintenance

Quality monitoring

Supply chain intelligence

Knowledge access

Regulatory assistance

Decision support

SOC 2 Type II Compliant

ISO 27001:2013 Certified

PCI DSS Compliant

GDPR aligned

Enterprise-grade encryption across all data flows and AI interactions

Fine-grained access control aligned with identity and enterprise systems

Strict data governance with zero unauthorized usage or retention

Secure deployment across isolated environments including VPC, private cloud, and on-premise

Policy-driven AI behavior with defined guardrails for agent actions and access

Human-in-the-loop workflows for approvals, validation, and oversight

Complete traceability across all AI actions, decisions, and outputs

Lifecycle management across models, prompts, and workflows

Alignment with global standards including ISO 27001, SOC 2, GDPR, and industry regulations

Data residency and control across geographic and regulatory boundaries

Content filtering and safety guardrails for compliant AI outputs

Continuous monitoring to detect anomalies, misuse, and operational risks

Explainable AI decisions with transparency into how outputs are generated

Bias mitigation frameworks to reduce unintended or unfair outcomes

Multi-model flexibility with centralized governance and control

Operational monitoring dashboards for usage, performance, and impact

We design, integrate, and deploy AI into real workflows across your systems.